Amid the modern marvels of technology, where reality and simulation are almost indistinguishable, a new evil has been born: the child of deep learning and ill will.

Enter the mysterious world of deepfakes, where reality is rewritten, reputations are destroyed, and trust becomes a rare commodity. On this journey into the tangled labyrinth of synthetic fraud, we set out to reveal its secrets, meet its victims, and arm ourselves with defenses against digital deceit.

Let’s understand what deepfakes are, how dangerous their impact can be, and what defensive measures can be taken!

Decoding Deepfakes: Understanding the Technological Marvel and Its Global Impact

The term “deepfake” is a fusion of “deep learning” and “fake.” Deepfakes are artificial intelligence-based manipulations used to create forged video, images, or audio with high fidelity.

These digital manipulations have become far more sophisticated in recent years and pose a serious threat not only to individuals and businesses but also to political landscapes.

Impacts and Victims

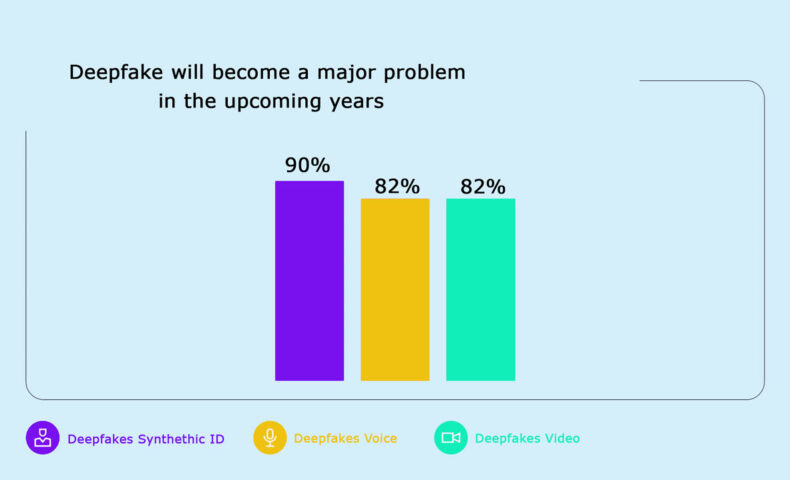

Deepfakes are a huge threat to society, and one that is multiplying at a tremendous rate. By 2025, the deepfake market is expected to be worth $1.5 billion! You may be surprised to learn that 60% of Americans believe deepfakes will become a major problem in the coming years.

Shattered Reputations:

- Deepfakes wield the power to assassinate the character of celebrities, politicians, and everyday individuals, leaving a trail of reputational wreckage.

- Comedian Sarah Silverman’s distressing experience of having her likeness maliciously superimposed onto explicit content is a testament to the chilling potential of deepfake attacks.

Financial Havoc:

- Businesses feel the impact too, as CEO impersonation scams exploit deepfakes and cause substantial financial losses. The €220,000 loss suffered in 2019 by a UK-based energy firm, whose CEO was fooled by an AI-cloned voice of the chief executive of its German parent company, underscores the severity of the threat.

Political Chessboard:

- Elections become battlegrounds for manipulation as deepfakes disseminate fabricated speeches, interviews, or scandals, injecting an element of chaos into political landscapes.

Social Manipulation:

- Deepfakes have emerged as potent tools for social engineering, fueling misinformation and sparking unrest. During the 2020 US elections, deepfakes of political figures were used in attempts to spread discord.

Unraveling the Deepfake Victims: A Global and Indian Perspective

From an Indian Perspective,

- Celebrities: The faces of many Bollywood celebrities, such as Alia Bhatt, Deepika Padukone, and Priyanka Chopra, have been superimposed onto explicit videos in malicious deepfakes. These incidents caused tremendous emotional strain and reputational harm, showing how exposed public figures are in the digital environment.

- Politicians: In India, deepfakes have been used to target politicians such as Rahul Gandhi and other public figures such as Sachin Tendulkar through fake speeches and interviews, raising concerns about election interference and social division.

- Ordinary Citizens: Ordinary citizens have not been spared either. There have been cases of revenge porn using deepfaked faces and of blackmail with fabricated videos, showing that strong legal frameworks and awareness campaigns are needed.

From a Global Perspective,

- Politicians: Deepfakes have also targeted leading figures such as Barack Obama, Donald Trump, and Angela Merkel, spreading misinformation and manipulating public opinion.

- Business Leaders: Victims of deepfake scams include CEOs and executives whose voices or faces were used to trick employees into transferring money or revealing confidential information. This shows how exposed businesses are to such attacks and why strong cybersecurity is essential.

Why must cybersecurity leaders prepare for deepfake phishing attacks in the enterprise?

Cybersecurity leaders must be vigilant against deepfake phishing attacks in enterprises due to advancing AI technologies and the wealth of personal data on social media. Deepfake phishing, using fabricated audio and video, poses significant risks, exemplified by a case where a CEO was deceived into transferring $243,000.

Because deepfake attacks can arrive through both real-time vectors (such as live voice or video calls) and non-real-time vectors (such as recorded voice messages or videos), CISOs must prioritize ongoing, engaging training that teaches users to recognize visual cues and proceed with caution. Strategies like “hit pause” and challenging the legitimacy of unexpected requests help organizations mitigate the escalating threat of deepfake phishing in the evolving landscape of cyber threats.
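One way to make “hit pause” concrete is a written verification rule: any sensitive or urgent request received by voice or video is held until the claimed sender is confirmed through a separate, pre-registered channel. The Python sketch below encodes such a rule. The contact directory, the action names, and the 10,000 threshold are hypothetical placeholders chosen for illustration, not a standard policy.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical directory of trusted contact numbers, registered in advance.
# Callbacks always use these numbers, never details supplied by the caller.
KNOWN_CONTACTS = {
    "ceo@example.com": "+1-555-0100",
}

# Hypothetical policy values; each organization would set its own.
VERIFICATION_THRESHOLD = 10_000
SENSITIVE_ACTIONS = {"wire_transfer", "share_credentials", "change_bank_details"}


@dataclass
class Request:
    claimed_sender: str   # who the caller or video participant claims to be
    action: str           # what they are asking the employee to do
    amount: float = 0.0   # money involved, if any
    urgent: bool = False  # caller is pressuring for immediate action


def needs_pause(req: Request) -> bool:
    """Return True if the request must be verified before anyone acts on it."""
    if req.action in SENSITIVE_ACTIONS:
        return True
    if req.amount >= VERIFICATION_THRESHOLD:
        return True
    # Artificial urgency is a classic social engineering cue on its own.
    return req.urgent


def decide(req: Request, callback_confirmed: Optional[bool]) -> str:
    """Return "proceed", "hold", or "reject".

    callback_confirmed is the outcome of calling the claimed sender back on the
    number in KNOWN_CONTACTS, or None if that callback has not happened yet.
    """
    if not needs_pause(req):
        return "proceed"
    if req.claimed_sender not in KNOWN_CONTACTS:
        return "reject"  # no trusted channel to verify the identity against
    if callback_confirmed is None:
        return "hold"    # "hit pause" until the out-of-band check is done
    return "proceed" if callback_confirmed else "reject"


if __name__ == "__main__":
    # A convincing voice asks for an urgent transfer: nothing moves until the callback.
    req = Request("ceo@example.com", "wire_transfer", amount=220_000, urgent=True)
    print(decide(req, None))   # hold
    print(decide(req, False))  # reject: the real CEO denies making the request
```

The key design choice is that verification never relies on the channel the request arrived on: however convincing a cloned voice or face is, the employee confirms it by calling a number the organization already trusts.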

The Surge in Deepfake Cybercrime: Navigating Legal Ambiguities in the Indian IT Act, 2000

The uptick in deepfake cybercrime results from technological leaps, tool accessibility, and a glaring legal void.

The Indian IT Act, 2000, lacks specific provisions for deepfakes, so cases must rely on sections like 66D (cheating by personation using a computer resource), 66E (violation of privacy), and 67A (publishing sexually explicit material in electronic form). Recognizing the need for comprehensive measures, the Indian government is contemplating amendments to the IT Act.

Detection Technologies and Challenges

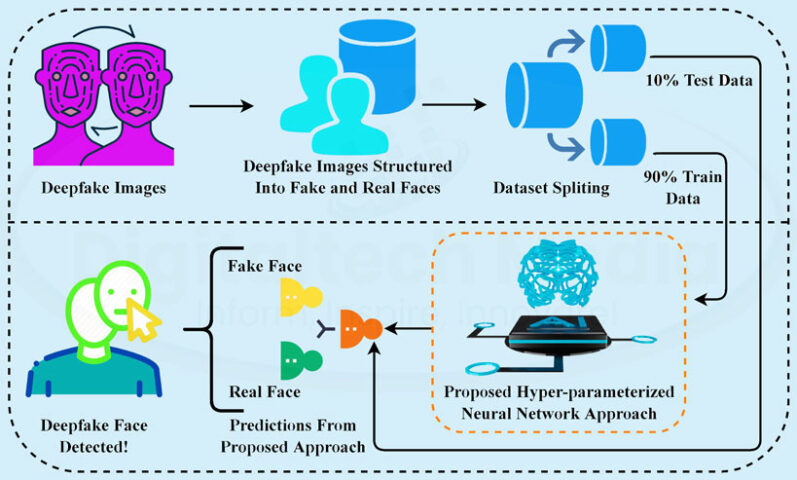

- Cybersecurity leaders must prepare for deepfake phishing attacks by adopting emerging detection technologies that analyze pixel-level details, lip movements, and speech characteristics.

- The gaps in the legal framework, particularly the lack of specific provisions for deepfakes under the IT Act, 2000, underline the need for legal amendments.

- Organizations require proactive cybersecurity strategies that integrate technological defenses and legal barriers to combat the diverse threat posed by deepfakes.

- To stay ahead in the battle against deepfakes, invest wisely in cutting-edge detection technologies that examine not only pixels but also subtle lip movements and speech patterns.
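Detection tools differ widely and most rely on trained neural networks, but the idea behind lip-movement analysis can be illustrated with a toy audio-visual consistency check. The sketch below assumes two hypothetical per-frame inputs: a mouth-opening measurement (which in practice might come from a facial-landmark detector, not shown) and the loudness of the audio track during the same frame. It flags a clip when the two barely move together. The function names and the 0.5 threshold are illustrative assumptions, not a production detector.

```python
import math
from typing import Sequence


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation of two equal-length series (0.0 if either is flat)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    if vx == 0 or vy == 0:
        return 0.0
    return cov / math.sqrt(vx * vy)


def sync_score(mouth_opening: Sequence[float], audio_energy: Sequence[float],
               max_lag: int = 3) -> float:
    """Best correlation between mouth movement and speech loudness.

    Small frame offsets (up to max_lag in either direction) are tried so that
    a slight, harmless audio/video delay is not mistaken for a mismatch.
    """
    if len(mouth_opening) != len(audio_energy):
        raise ValueError("series must have one value per video frame")
    n = len(mouth_opening)
    best = -1.0
    for lag in range(-max_lag, max_lag + 1):
        if lag >= 0:
            # Pair mouth frame i + lag with audio frame i (video runs behind).
            a, b = mouth_opening[lag:], audio_energy[:n - lag]
        else:
            # Pair mouth frame i with audio frame i - lag (audio runs behind).
            a, b = mouth_opening[:n + lag], audio_energy[-lag:]
        if len(a) >= 2:
            best = max(best, pearson(a, b))
    return best


def looks_out_of_sync(mouth_opening: Sequence[float],
                      audio_energy: Sequence[float],
                      threshold: float = 0.5) -> bool:
    """Flag a clip for closer review when lips and speech barely move together."""
    return sync_score(mouth_opening, audio_energy) < threshold
```

For example, a mouth track that follows the audio two frames late still scores close to 1.0 and is not flagged, while a frozen mouth over active speech scores 0.0 and is flagged. A real pipeline would combine many such signals, such as pixel-level artifacts and speech characteristics, and treat a flag as a reason for human review rather than proof of manipulation.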

Conclusion

As we go deeper into this digital darkness, the threat of deepfakes demands our united attention. The combination of sophisticated technology and bad intentions is a significant challenge, and defeating this artificial threat will take all hands on deck.